Inochi2D - Free Open Source 2D VTuber Avatar Rigging and Puppeteering Software (Part 2 - Inochi2D Session)

Both sides of the software (Creator and Session) are still very much in development, and the documentation, particularly for Session, is very thin on the ground. So thin, in fact, that I don't think I could write a comprehensive tutorial: I'm not sure I'm even doing things right, and the software could change significantly in a single update.

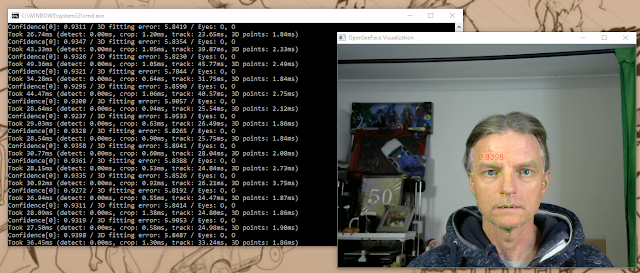

As a result, in this part of my Inochi2D deep dive I'm changing tack. Rather than presenting my finished Cartoon Animator TET avatar, I'll summarize my experience of getting Session up and running with OpenSeeFace, the recommended webcam motion capture software.

To do this I'll use the TET avatar I created in my review of Mannequin, since Mannequin can export it as a complete, ready-to-go rig for Session, bypassing Inochi2D Creator altogether. If you want to give this a try, the free version of Mannequin is all you need.

Before You Start, Review Your Mannequin Avatar

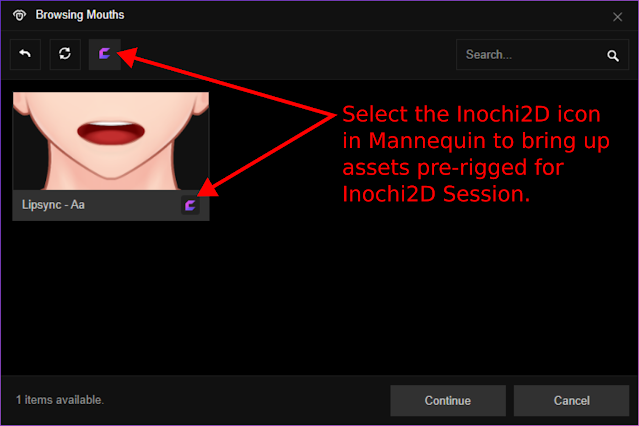

While writing this article I realized that if you want your Mannequin avatar to be fully motion capture rigged for Inochi2D Session, you need to use assets that are pre-rigged for Inochi2D. This means that when putting your avatar together, you should filter the various asset galleries by the Inochi2D logo. For example, there is only one mouth in Mannequin you can use if you want your character to lip sync in Session.

Setting Up My Mannequin TET Avatar in Session

To get my character working in Session, I followed Mannequin's video tutorial on setting up your character in Inochi2D Session with a webcam.

Note that this tutorial continues from an earlier Mannequin video tutorial; watch the end of that one for instructions on connecting Session to OBS for livestreaming.

| On the Export tab of Mannequin these are the settings you need to pay attention to. |

The first step is to export your finished character from Mannequin. Set the file type to .INX for Inochi2D. You can then choose which motion capture method you want to use.

When Session starts, a number of settings windows are already open (stacked on top of each other). If they aren't, you can turn them on under the View menu. Click the Tracking window's title bar and drag it over one of the side tabs that appear, to dock it to the side of the main window. You'll be using this window a lot to fine-tune your model.

In the Scene settings window you can add a background to your scene, or set it to one of four solid colors you can chroma key out in OBS, so your avatar can appear in front of other content.


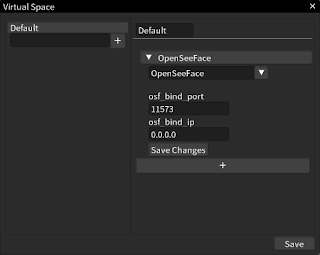

| Inochi2D Session's Virtual Space window set up for OpenSeeFace. |

If this is your first time using Session, you'll need to create a space in the Virtual Space window: type any name into the box and click the '+' button. Then select the space you just created. It will appear alongside another '+' button for you to click.

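Click it and select OpenSeeFace from the drop-down box. Then enter 11573 in the osf_bind_port box and 0.0.0.0 in the osf_bind_ip box. The address 0.0.0.0 tells Session to listen on all network interfaces, and 11573 is the port OpenSeeFace sends its tracking data to by default. Click Save Changes, then click Save on the Virtual Space window itself.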
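If your avatar doesn't react, it can help to confirm that tracking data is actually arriving on that port. The following is a minimal Python sketch, assuming OpenSeeFace is running and sending its tracking data as UDP packets to port 11573. The helper `wait_for_tracking_packet` is hypothetical (it is not part of Session or OpenSeeFace), it only counts bytes rather than decoding OpenSeeFace's packet format, and you should close Session before running it, since two programs normally can't bind the same UDP port at once.

```python
import socket
from typing import Optional

# Values entered in Session's OpenSeeFace settings.
OSF_BIND_IP = "0.0.0.0"  # listen on every network interface
OSF_BIND_PORT = 11573    # assumed: the UDP port OpenSeeFace sends tracking data to


def wait_for_tracking_packet(ip: str = OSF_BIND_IP,
                             port: int = OSF_BIND_PORT,
                             timeout: float = 5.0) -> Optional[int]:
    """Hypothetical helper: bind a UDP socket to ip:port and wait for one datagram.

    Returns the datagram's size in bytes, or None if nothing arrives within
    `timeout` seconds. It does not decode the tracking data itself.
    """
    with socket.socket(socket.AF_INET, socket.SOCK_DGRAM) as sock:
        sock.bind((ip, port))  # typically fails if Session already holds the port
        sock.settimeout(timeout)
        try:
            data, _sender = sock.recvfrom(65535)
        except socket.timeout:
            return None
    return len(data)
```

With OpenSeeFace running and Session closed, calling `wait_for_tracking_packet()` should return a packet size within a few seconds. A result of `None` suggests nothing is being sent to that port (or a firewall is blocking it), which would also explain a motionless avatar in Session.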

If you're in front of your camera, your avatar should start reacting to your movements. If nothing is happening in the Tracking window, click your avatar to select it; the Tracking window (and the Blend Shapes window) should then fill with data.

The final step is to fine-tune the tracking settings to minimize any jitter you may be seeing. You do this by increasing the Dampen setting for each attribute. Once you're happy, click the Save to File button at the top of the Tracking window to save the tracking settings with your avatar, so you won't have to readjust them every time.
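How Session calculates its Dampen setting isn't documented here, so the following is only a generic illustration of the trade-off, assuming a simple exponential smoothing filter (a stand-in technique, not Session's actual code). Higher damping suppresses frame-to-frame jitter, but the avatar lags further behind sudden movements. The function `dampen` is hypothetical.

```python
from typing import Iterable, List, Optional


def dampen(samples: Iterable[float], damping: float) -> List[float]:
    """Hypothetical exponential smoothing of a stream of tracking values.

    damping is in [0, 1): 0 passes values through unchanged; values closer
    to 1 weight the previous output more heavily, which suppresses jitter
    at the cost of extra lag. (Assumed model, not Session's implementation.)
    """
    if not 0.0 <= damping < 1.0:
        raise ValueError("damping must be in [0, 1)")
    smoothed: List[float] = []
    current: Optional[float] = None
    for x in samples:
        # First sample passes straight through; later ones blend with the previous output.
        current = x if current is None else damping * current + (1.0 - damping) * x
        smoothed.append(current)
    return smoothed
```

With damping at 0.5, a sudden jump from 0 to 1 (`dampen([0.0, 1.0, 1.0, 1.0], 0.5)`) reaches only 0.5, 0.75 and then 0.875 over the following frames. That lag is the price of a steadier avatar, which is why it's worth raising the setting only as far as needed for each attribute.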

From here you're good to go. Follow the earlier Mannequin tutorial mentioned above to connect Session to OBS.

Note that Session lets you open more than one avatar at a time, and they all move in unison from the same motion capture feed. I presume it's possible to set up multiple motion capture sources, so that two or more people could potentially operate separate avatars on one livestream.

My Cartoon Animator TET Avatar

To finish up this series on VTuber software and Inochi2D, here's a quick update on my own Cartoon Animator TET avatar, which I was trying to rig in this software.

While I never rigged the full character for Inochi2D, I was able to test in Session the mouth sprite-switching rig I was working on in part 1 of this series.

Initially it behaved the same way it did in Inochi2D Creator, with the mouth shapes dissolving from one sprite to the next. That obviously wasn't usable: even though the dissolve was quick, it was very noticeable.

I then started experimenting with the 'tracking out' settings in Session for Mouth Open tracking, and fixed the problem simply by setting the second tracking out number (the maximum) to one or higher. The mouth sprites then switched exactly as expected and looked great.

| On the left is my TET avatar mid mouth-sprite change, showing the crossfade effect. Increasing the Tracking out maximum to one or more resolved this, giving a clean, instant sprite switch. |

I didn't take my own Cartoon Animator TET avatar any further for this blog post series because I need a lot of time just to tinker with how to actually rig it, and to learn how the various options map to the motion capture (not to mention adding auto motions). That's time I just don't have in the space of a couple of weeks.

If you want to see what's possible with an Inochi2D avatar, try one of Inochi2D's demo models in Session and hook up all the tracking to its various parameters. Or try a female Mannequin avatar in Session, since it will already be rigged and ready to go.

The takeaway: if you want to use character templates from Cartoon Animator as a source of sprites for an Inochi2D avatar, it is certainly possible, and you can sprite-switch the mouth rather than build it in the more typical VTuber style of deforming the lips in front of an interior mouth sprite.

If I get my avatar up and running, I will no doubt add a third post to this blog series. For now, there's enough here to make up for the lack of documentation for Session, and the documentation for Creator should be more than enough to help you rig your characters.

I think I've focused on VTuber software more than enough and it's time to move on to other animation and video related topics.
