Illustrating Dystopian Future Poems with Stable Diffusion and Dall.e 2 AIs - Comparing Directed with Non-Directed Output

Her addiction was the feeling of scoring a bargain. Image by Hugging Face Stable Diffusion Demo based on a prompt by David Arandle (TET).

Far from being fearful of AI image generators like Dall.e 2 and Stable Diffusion, I feel a new skill set is emerging for artists who embrace the technology. Chief among those skills are the ability to write 'quality' text inputs and the ability to curate the best outputs, i.e. images, if they are being fed into a bigger project or art piece.

Case in point. One of my FB friends shared an article by Jesus Diaz, AI was made to turn David Bowie songs into surreal music videos, in which YouTuber aidontknow fed the lyrics of Bowie's Space Oddity into Midjourney AI and then curated the resulting images into a video clip for the song (embed below).

While aidontknow says they made minimal changes to the lyrics, such as clarifying which characters are being spoken about in the song, in my experience you don't get quite that cohesive a range of images without also suggesting an art style and giving more detailed direction, such as camera shots.

Regardless, this inspired me to revive one of a series of dystopian future poems I wrote between 2005 and 2006 dealing with the human condition, virtual reality consumerism, and AIs. The poem is titled, Rachel. The video of me reciting it is actually the very first entry in this blog (because this blog was initially going to be an art piece telling the story of those poems. If you read that post it's actually the start of a story rather than a blog post).

One at a time, I entered each line of my ten-line poem into both DreamStudio's Stable Diffusion AI and Dall.e 2's AI, with no modification to the lines other than appending "An Oil painting the style of Blade Runner the movie. Wide angle lens." to the end of each prompt. This was to give every image a unified look; when I wrote the poems, I always imagined a Blade Runner style for the art.
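As a rough sketch of that non-directed approach, the snippet below appends the shared style suffix to each poem line. The function name `build_prompt` and the list `poem_lines` are hypothetical names used only for illustration, and only the one poem line quoted in this post is included. It builds the prompt text only; it does not call either image generator.

```python
# Style suffix quoted from the post; appended unchanged to every poem line.
STYLE_SUFFIX = "An Oil painting the style of Blade Runner the movie. Wide angle lens."


def build_prompt(poem_line: str, suffix: str = STYLE_SUFFIX) -> str:
    """Return the poem line followed by the shared style suffix."""
    return f"{poem_line.strip()} {suffix}"


# Only the first line of the poem is quoted in this post; the other
# nine lines would be added to this list in the same way.
poem_lines = ["Rachael patched in a circuit wired to her brain."]

prompts = [build_prompt(line) for line in poem_lines]
for prompt in prompts:
    print(prompt)
```

Keeping the suffix in one place means every prompt gets exactly the same style direction, which is what gives the images their unified look.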

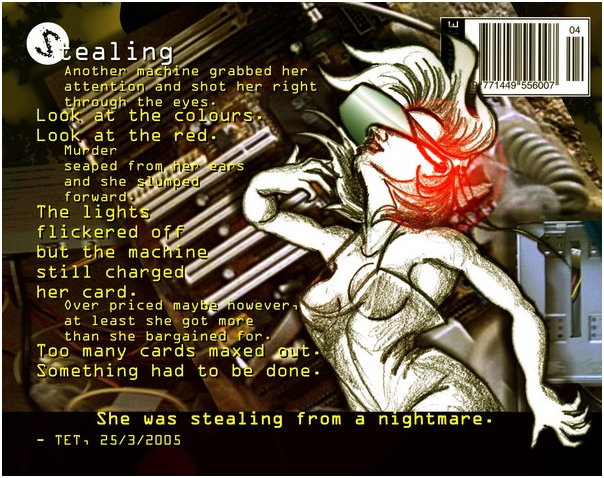

You can see this Blade Runner influence in the digital art image I created for another of the poems (below) called, Stealing. This image is the first and only complete artwork I made at the time.

Stealing. One of nine dystopian future poems written by TET in 2005-2006. Art by TET.

Incidentally you can read more about my whole concept, and read another of the poems, The Fabulous Machine, in my TET Life blog article, Virtual Reality Addiction Meets Online Shopping and Death! I digress.

Feeding my ten lines into both AIs, for each line I generated eight images with DreamStudio (which was free at the time) and four with Dall.e 2, where I only had enough free credit left to enter nine of my ten lines.
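To give a sense of how much curating that involves, here is the arithmetic as a small sketch. It assumes every prompt returned its full batch of images, which the post does not state explicitly; the variable names are just for illustration.

```python
POEM_LINES = 10
DREAMSTUDIO_IMAGES_PER_LINE = 8
DALLE_IMAGES_PER_LINE = 4
DALLE_LINES_ENTERED = 9  # free credit ran out before the tenth line

# Assumption: every generation returned its full batch of images.
dreamstudio_total = POEM_LINES * DREAMSTUDIO_IMAGES_PER_LINE
dalle_total = DALLE_LINES_ENTERED * DALLE_IMAGES_PER_LINE

print(dreamstudio_total, dalle_total, dreamstudio_total + dalle_total)  # 80 36 116
```

Under that assumption there are over a hundred candidate images to pick from for a ten-line poem.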

I curated the best images from both AIs into a video presentation that includes the poem's words, plus YouTube library music and other sound effects. Hopefully it gives you a sense of the poem, its mood, and what it's trying to convey.

Most of the more polished, cleaner-looking images are by DreamStudio, which tended to fixate on the neon-lit darkness of the city depicted in Blade Runner, while the more painterly images are Dall.e 2's, which must feel the textured oil paint look is the definition of 'oil painting'.

At this point I thought I was going to finish the project but then I started to wonder, how would my video presentation look with more directed images that actually describe more of the type of image I had in mind for each line of the poem?

For example, for the first line of the poem I originally entered this prompt:

Rachael patched in a circuit wired to her brain. An Oil painting the style of Blade Runner the movie.

For my second video presentation I entered this prompt:

Rachael, sitting on her bed, wearing a VR headset wired to a computer in her cyberpunk style bedroom, patched in a circuit wired to her brain. An Oil painting the style of Blade Runner the movie. Wide angle lens.

As you can see, it is a lot more detailed and no longer the exact line verbatim. Below is a side by side comparison of what I feel is the best image produced for each prompt, both generated by DreamStudio.

Which image is the better interpretation of the first line is entirely subjective, especially as what I envisioned in my head is not necessarily what anyone else would imagine when reading my poem for the first time, because no one else has all the additional context I do.
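One way to think about the directed prompt for the first line is as the original line with extra scene direction slotted in between the subject and the action. The sketch below rebuilds that prompt from its parts; the function `directed_prompt` and its parameter names are hypothetical, and splitting the line this way is my own framing rather than a feature of any of the AI tools.

```python
# Style suffix quoted from the post.
STYLE_SUFFIX = "An Oil painting the style of Blade Runner the movie. Wide angle lens."


def directed_prompt(subject: str, direction: str, action: str,
                    suffix: str = STYLE_SUFFIX) -> str:
    """Join subject, scene direction, and action, then add the style suffix."""
    return f"{subject}, {direction}, {action} {suffix}"


prompt = directed_prompt(
    subject="Rachael",
    direction=("sitting on her bed, wearing a VR headset wired to a computer "
               "in her cyberpunk style bedroom"),
    action="patched in a circuit wired to her brain.",
)
print(prompt)
```

Separating the direction from the poem line makes it easy to try a different scene description while leaving the original words and the style suffix untouched.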

Below is my updated video presentation, using the best images generated with my more detailed input prompts (same music and sound effects just to save time).

Note that I didn't use Dall.e 2 this time because I didn't want to pay for more credit. I didn't use DreamStudio directly either, for similar credit reasons (I'm not like Rachael, spending all my money on zeroes and ones for fun). Instead I used Hugging Face's demo version of Stable Diffusion, which is slower but essentially the same AI with a few fewer settings, and completely free at the time of writing. (Insert rant here about all these AIs putting up paywalls rather than going the free, ad-supported route.)

Anyway, what do you think of my second video presentation?

Creating works like this really does show that the human element of generating quality prompts is very much a skill to be learned, as is the curation of the output. Not every prompt produces the results you are hoping for, particularly if the AI fixates on the wrong part of a prompt as the main subject to highlight.

Several times in my second presentation I completely scrapped detailed prompts that I thought should get good results but were just producing garbage (never has the computing maxim "garbage in, garbage out" been more apt than with text to image AI generators).

As I said in my previous musing on AIs, Is Your Next Design or Writing Partner an AI?, these algorithms do not actually think for themselves. Even if you were to use a writing AI to randomly generate prompts for an image AI, neither would have any grasp of the output as an abstract concept, or of how that concept might relate to other prompts. The human element is still key in getting the best images.

I'm tempted to try this with all my poems in this series. It seems very appropriate to use AI to generate images for poems about AI and how humans are finding more ways to hook themselves into 'the machine' for longer and longer periods at a time (not to mention the rise of corporate money machines, passively draining your bank account - did I mention all the text to image AIs being put behind paywalls yet?).

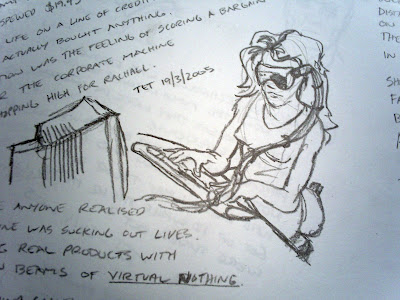

One of my original concept sketches for Stealing drawn alongside the poem in 2005.

The question is, now that I've been influenced by AI text to image generators, could I even produce what I had in mind back in 2005?
